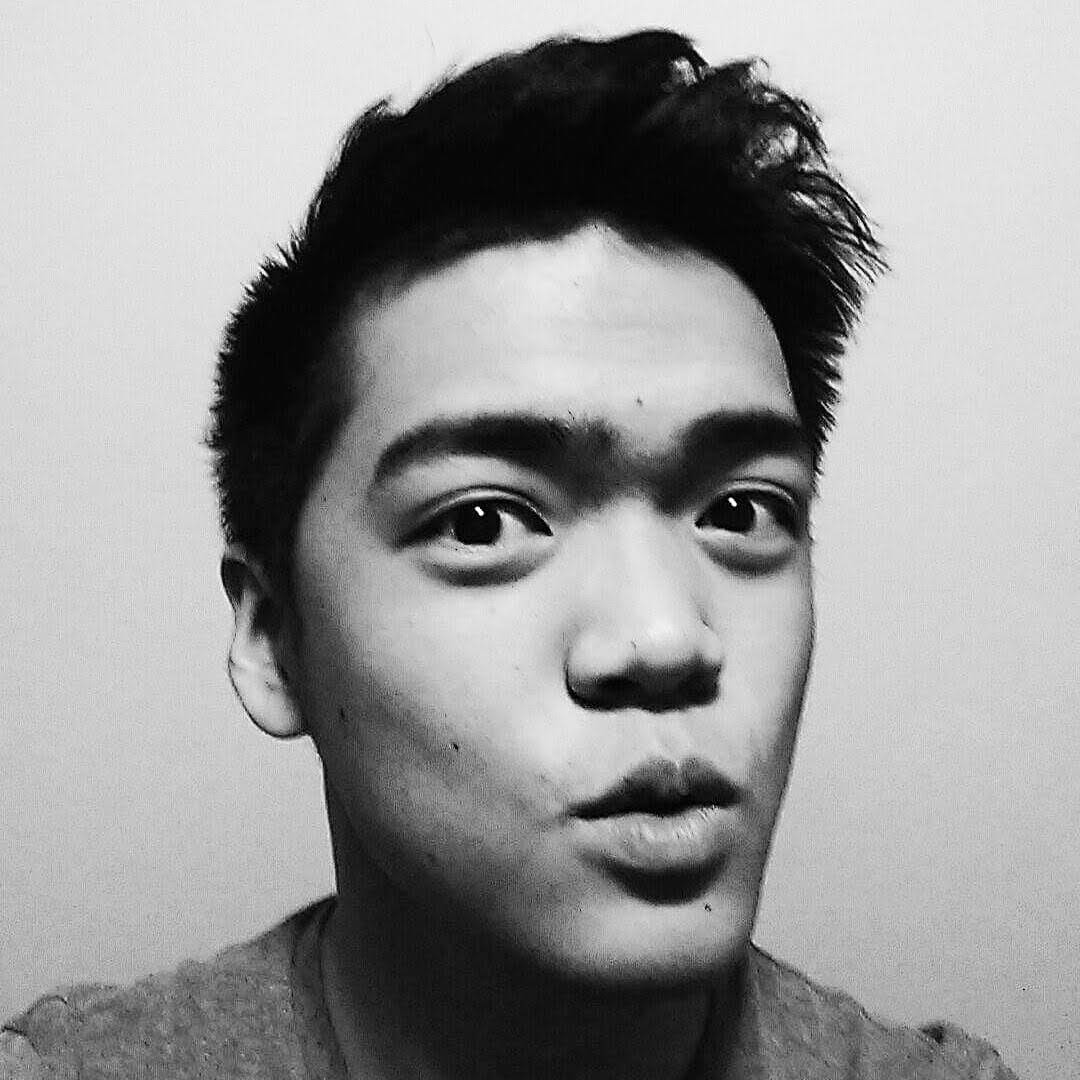

I work on AI for Optimus at Tesla. I did my PhD at Stanford, advised by Chelsea Finn and Jiajun Wu and supported by a Sequoia Capital Stanford Graduate Fellowship in Science & Engineering and an NSERC Postgraduate Scholarship – Doctoral.

I'm a bilingual Taiwanese-Canadian. In my free time, I like to ski & snowboard and play Soulslike & board games.